
How Fintech Teams Ship Faster with GDPR-Compliant Test Data

Fintech teams are under constant pressure to release faster, while compliance, security, and privacy expectations keep rising. In regulated environments, that tension shows up most clearly in test data.

Teams need realistic data for development, QA, integration, and regression testing. But using production data in lower environments creates obvious risk. Even when that data is masked or partially transformed, the process is often slow, manual, and difficult to scale.

That is why GDPR-compliant test data has become a practical engineering issue, not just a legal one.

For fintech teams, the challenge is simple: how do you get compliant test data fast enough to support CI/CD, without exposing production data or weakening the usefulness of the test environment?

Why fintech teams struggle with test data in CI/CD

Modern fintech delivery depends on speed. Teams ship changes to onboarding flows, payments journeys, fraud rules, and internal APIs on increasingly short cycles. But the test-data workflow often still runs on an older model.

A team needs data. A request is raised. Another team exports, masks, or prepares a subset. The result arrives days later. Then someone finds stale relationships, missing edge cases, or broken joins, and the cycle starts again.

That creates a structural gap between how fast a fintech team wants to move and how fast its testing infrastructure allows it to move.

It also creates risk. Financial firms still operate under high expectations around resilience, security, and safe handling of sensitive data under frameworks such as DORA, PCI DSS, and GDPR Article 32. DORA applies to financial entities from 17 January 2025, and GDPR Article 32 explicitly refers to appropriate technical and organisational measures, including pseudonymisation and encryption where appropriate.

What GDPR-compliant test data should actually do

Good GDPR-compliant test data is not just data that looks safer than production. It should help fintech teams do four things at once:

- protect personal and payment-related information

- support realistic testing

- preserve business logic and system relationships

- arrive quickly enough to keep delivery moving

That last point matters more than many teams expect.

A dataset can be privacy-safer than production and still be poor test data if it is late, incomplete, inconsistent, or hard to recreate. For fintech teams, usable test data needs to be both safe and operationally reliable.

Why copied production data slows compliant delivery

Many organizations still rely on production-derived datasets for testing. Sometimes that means copying production and masking selected fields. Sometimes it means pseudonymizing records and tightly controlling access. In both cases, teams often inherit the same core problem: the workflow remains dependent on production history, manual intervention, and repeated coordination.

That creates friction exactly where fintech teams need speed.

DATAMIMIC’s Tier-1 European Bank case study shows what that friction costs. It describes a regulated banking environment where masked snapshots took 20–28 days to prepare. After the shift, preparation dropped to 6–12 days and parallel execution rose to 90%.

That is the value of better test-data infrastructure. It is not just about reducing privacy risk. It is about removing the delivery bottlenecks that slow release cycles.

Why referential integrity matters in fintech testing

For financial products, realistic values alone are not enough.

A test environment also needs to preserve referential integrity across customers, accounts, transactions, documents, and downstream messages. If those relationships drift, the test may appear to pass while the underlying workflow no longer reflects how the system behaves in practice.

That is why teams evaluating test data for databases, APIs, and event-driven services need more than field-level masking. They need an approach that preserves logic across the wider ecosystem.
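As a rough illustration of what "preserving relationships" means in practice, the sketch below checks a small dataset for orphaned records. The record types, field names, and the `find_orphans` helper are hypothetical and chosen only for this example; they are not taken from any DATAMIMIC API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Customer:
    customer_id: str


@dataclass(frozen=True)
class Account:
    account_id: str
    customer_id: str  # should reference an existing Customer


@dataclass(frozen=True)
class Transaction:
    transaction_id: str
    account_id: str  # should reference an existing Account
    amount_cents: int


def find_orphans(customers, accounts, transactions):
    """Return IDs of accounts and transactions whose parent record is missing."""
    customer_ids = {c.customer_id for c in customers}
    account_ids = {a.account_id for a in accounts}
    orphan_accounts = [
        a.account_id for a in accounts if a.customer_id not in customer_ids
    ]
    orphan_transactions = [
        t.transaction_id for t in transactions if t.account_id not in account_ids
    ]
    return orphan_accounts, orphan_transactions


customers = [Customer("C1")]
accounts = [Account("A1", "C1"), Account("A2", "C9")]  # C9 does not exist
transactions = [
    Transaction("T1", "A1", 1250),
    Transaction("T2", "A7", 300),  # A7 does not exist
]

orphan_accounts, orphan_transactions = find_orphans(customers, accounts, transactions)
print(orphan_accounts, orphan_transactions)  # ['A2'] ['T2']
```

A check like this can run right after a dataset is masked or generated, so broken joins surface before a test suite starts rather than halfway through it.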

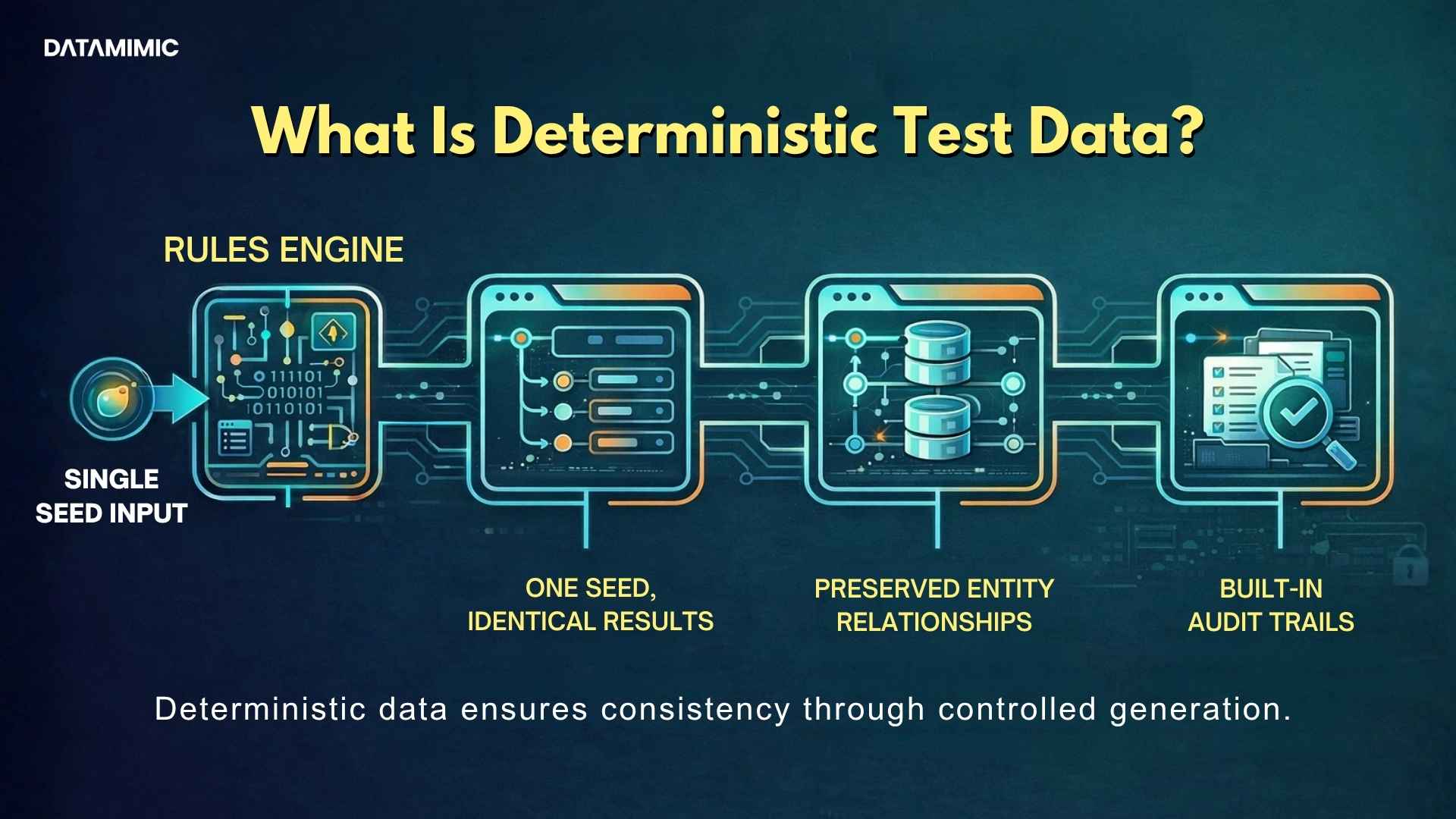

This is where deterministic test data becomes relevant. When the same rules can produce the same outputs repeatedly, teams get test data that is easier to rerun, validate, and debug.
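A minimal sketch of the idea, assuming a seeded generator from Python's standard library: the same seed and the same rules yield the same records on every run. The field names and value pools are made up for illustration and do not represent how any particular tool generates data.

```python
import random


def generate_customers(seed: int, count: int) -> list[dict]:
    """Generate synthetic customer records from a seed; no production data is read."""
    rng = random.Random(seed)  # local generator, global random state is untouched
    first_names = ["Anna", "Ben", "Carla", "David"]
    countries = ["DE", "FR", "NL", "ES"]
    records = []
    for index in range(count):
        records.append(
            {
                "customer_id": f"CUST-{index:05d}",
                "first_name": rng.choice(first_names),
                "country": rng.choice(countries),
                "balance_cents": rng.randint(0, 5_000_000),
            }
        )
    return records


run_a = generate_customers(seed=42, count=3)
run_b = generate_customers(seed=42, count=3)
print(run_a == run_b)  # True: same seed and rules, same records
```

Because a failing test can be rerun against an identical dataset, a defect can be separated from data drift.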

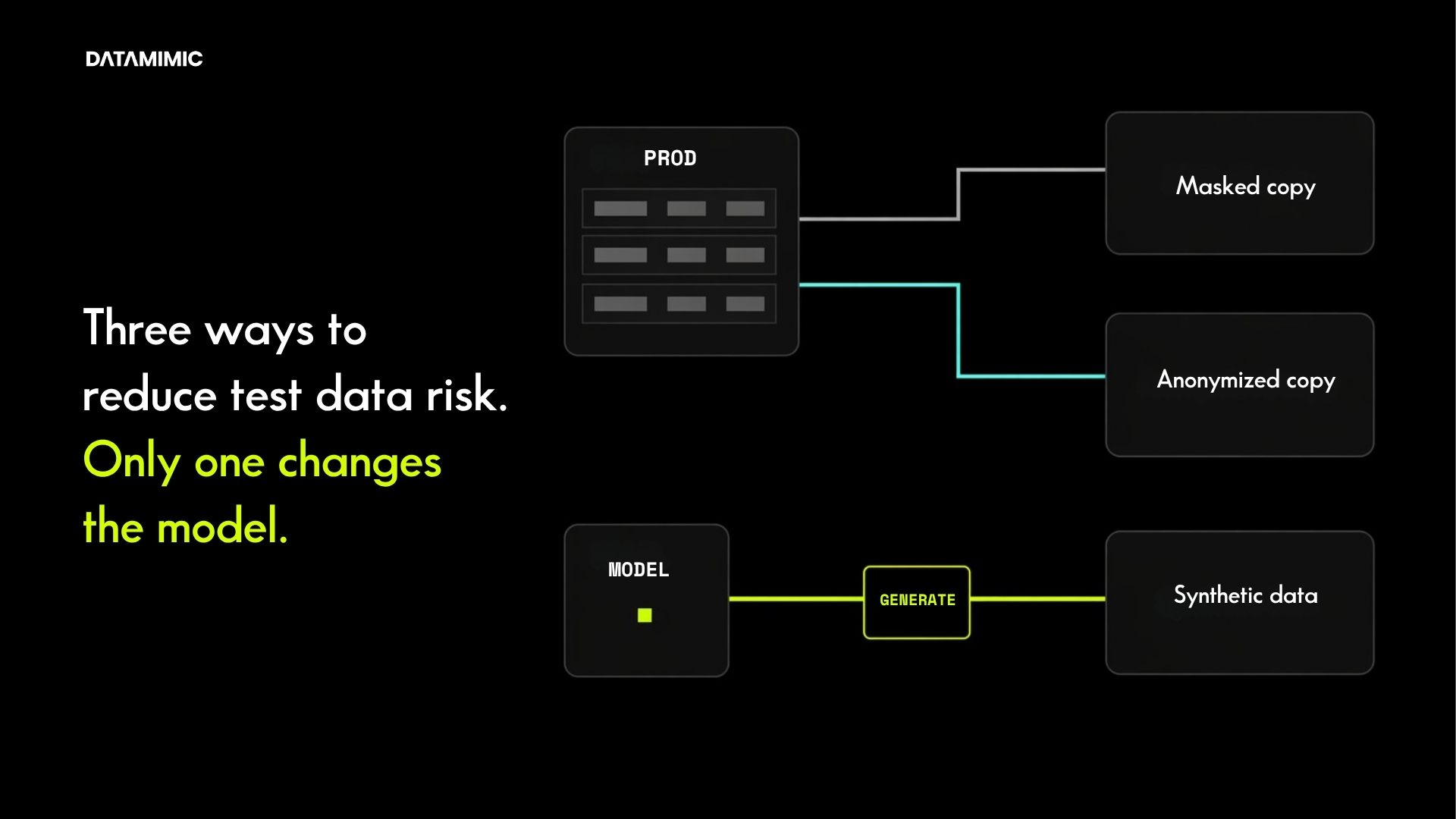

Data masking vs synthetic data for regulated fintech teams

There is still a place for data masking and anonymization. Some teams need transitional controls around existing data flows, or targeted privacy protection in specific environments.

But many fintech teams eventually reach a point where masking no longer solves the operational problem. It may reduce exposure, yet still leave them with slow refreshes, inconsistent data, and recurring maintenance work.

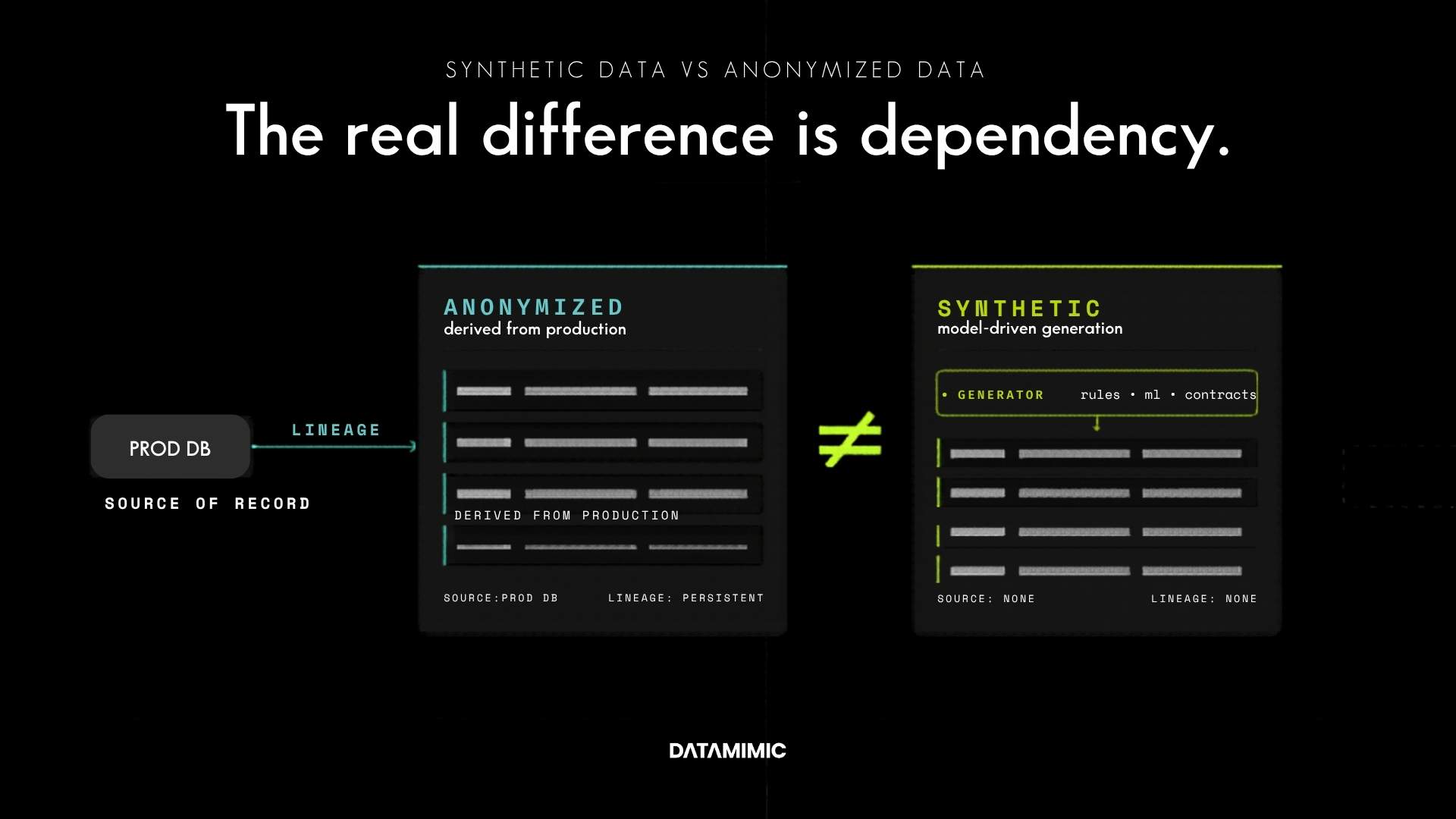

That is why the more useful comparison is often between synthetic data and anonymized data, especially when the goal is to support delivery at scale without relying on copied production identities.

Why pseudonymisation alone is not always enough

This is also where language matters.

The ICO explains that pseudonymised data can still be personal data if it can still be attributed to a person using additional information. It also says pseudonymisation can reduce risk and support compliance, but it is not a universal shortcut to “safe to use anywhere.”

For fintech teams, that is an important distinction. A privacy measure can reduce risk while still leaving governance, access-control, and operational burdens in place.
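The distinction can be sketched with a keyed hash, one common way to pseudonymise an identifier. This is an illustrative assumption, not a description of any specific product: the key, the placeholder account identifiers, and the `pseudonymise` helper are all invented for the example.

```python
import hashlib
import hmac


def pseudonymise(value: str, key: bytes) -> str:
    """Replace an identifier with a keyed HMAC-SHA256 token."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


secret_key = b"example-key-for-illustration-only"
known_ids = ["DE00EXAMPLE0001", "DE00EXAMPLE0002"]  # made-up placeholder values

token = pseudonymise("DE00EXAMPLE0001", secret_key)

# Anyone holding the key and a list of candidate identifiers can rebuild
# the mapping, so the token can still be attributed to a person.
lookup = {pseudonymise(candidate, secret_key): candidate for candidate in known_ids}
print(lookup[token])  # DE00EXAMPLE0001
```

The token hides the identifier from casual view, but the "additional information" (here, the key) still has to be governed and access-controlled.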

Why on-demand test data in CI/CD matters

The most useful test data is not the dataset that arrived last week. It is the dataset a team can generate when it needs it.

That is why on-demand test data matters so much in CI/CD. When compliant data generation becomes part of delivery, teams stop treating test data as a separate approval queue and start treating it as engineering infrastructure.

DATAMIMIC’s feature documentation highlights GDPR compliance, data anonymization and pseudonymization, advanced JSON/XML handling, and seamless integration. Its API documentation says the API supports integration into CI/CD pipelines for generation, obfuscation, and processing workflows.

For fintech teams, that means compliant test data can become faster to provision, easier to maintain, and more reliable for regression and integration testing.
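One way to picture generation as part of a pipeline is to derive the data seed from a build identifier, so every run of the same build sees the same synthetic payments. The sketch below uses only the Python standard library and does not call the DATAMIMIC API. The environment variable `TEST_DATA_BUILD_ID` and both function names are hypothetical.

```python
import hashlib
import os
import random


def seed_from_build(build_id: str) -> int:
    """Derive a stable integer seed from a build identifier such as a commit SHA."""
    digest = hashlib.sha256(build_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def generate_payments(build_id: str, count: int) -> list[dict]:
    """Generate synthetic payments that are reproducible for a given build."""
    rng = random.Random(seed_from_build(build_id))
    return [
        {
            "payment_id": f"PAY-{index:06d}",
            "amount_cents": rng.randint(1, 1_000_000),
            "currency": rng.choice(["EUR", "GBP", "USD"]),
        }
        for index in range(count)
    ]


# TEST_DATA_BUILD_ID is a hypothetical variable name; a fixed value is used locally.
build_id = os.environ.get("TEST_DATA_BUILD_ID", "local-dev")
payments = generate_payments(build_id, count=5)
print(len(payments), payments[0]["payment_id"])  # 5 PAY-000000
```

A test job could call such a function in its setup step instead of waiting for a prepared snapshot, which is the shift from approval queue to engineering infrastructure described above.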

Why modern fintech test data is not only about databases

Modern fintech systems are rarely limited to flat relational tables. They span APIs, nested payloads, event streams, document stores, and multiple schemas evolving at once.

That is why test-data quality often breaks down at the edges.

DATAMIMIC’s documentation specifically highlights support for complex and deeply nested JSON and XML structures, hierarchical data modeling, and generation across intricate enterprise structures.
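To make the nested case concrete, here is a small sketch of a synthetic payment event whose inner objects stay consistent with each other. The payload shape, the event name, and the `build_payment_event` helper are assumptions made for this example and are not drawn from DATAMIMIC's documentation.

```python
import json
import random


def build_payment_event(rng: random.Random, index: int) -> dict:
    """Build one nested, synthetic payment event with internally consistent IDs."""
    customer_id = f"CUST-{index:05d}"
    account_id = f"ACC-{index:05d}"
    items = [
        {"line": n + 1, "amount_cents": rng.randint(100, 50_000)}
        for n in range(rng.randint(1, 3))
    ]
    return {
        "event_type": "payment.created",
        "customer": {
            "customer_id": customer_id,
            "accounts": [{"account_id": account_id}],
        },
        "payment": {
            "debtor_account_id": account_id,  # same ID as the nested account above
            "items": items,
            "total_cents": sum(item["amount_cents"] for item in items),
        },
    }


event = build_payment_event(random.Random(7), index=1)
payload = json.dumps(event, indent=2)
print(payload)
```

The point of the sketch is that the total and the account reference are computed from the same values that appear deeper in the structure, so an API or event consumer under test sees a payload that holds together.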

And in live streaming scenarios, the adjacent need may be operational anonymisation rather than lower-environment synthetic test data. DATAMIMIC’s ACI Worldwide case study describes real-time anonymisation at scale for millions of payment records per hour, with deterministic consistency and audit-ready reporting.

That case is relevant to regulated payments workflows, although lower-environment synthetic test data remains the main focus here.

What fintech teams should look for in a compliant test-data approach

A strong approach should help teams:

- avoid exposing live personal and payment data in non-production environments

- generate realistic but safe data quickly

- preserve integrity across systems

- support repeatable test runs

- handle structured and nested data

- fit naturally into automated delivery workflows

Buyers evaluating compliant delivery often move from educational content to solution evaluation, and data protection software is the natural next step in that evaluation.

Compliant test data should support speed, not block it!

Fintech teams should not have to choose between speed and compliance.

The better path is to replace slow, production-dependent workflows with test data that is safe, reproducible, and operationally useful. When teams can generate compliant test data on demand, preserve integrity across systems, and keep lower environments privacy-safe, delivery becomes faster without becoming riskier.

For readers comparing approaches, the next steps are clear: review deterministic test data, compare data masking and anonymization, explore synthetic data and anonymized data, and connect that evaluation to broader data protection software decisions for regulated engineering teams.

Alexander Kell

August 7, 2025
