
Real-Time Data Masking in Kafka Streams for Payment Systems

Payment systems built on Apache Kafka process millions of transactions carrying primary account numbers (PANs), cardholder names, CVVs, and account identifiers. That data replicates across topics, fans out to consumer groups, and lands in analytics pipelines and non-production environments, and every copy is a compliance liability. For modern payment architectures, real-time data masking has become a baseline requirement for cardholder data protection and Kafka data privacy under PCI DSS 4.0, GDPR Art. 25, and DORA (the EU Digital Operational Resilience Act).

This post focuses on anonymization for non-production use cases: testing, analytics, partner integrations, and resilience exercises. For protecting production payment data in flight, encryption and access control remain the primary controls. The relevant encryption options are Confluent’s Client-Side Field-Level Encryption (CSFLE) and format-preserving encryption (FPE).

Why Kafka Data Privacy Is Harder Than Batch Masking

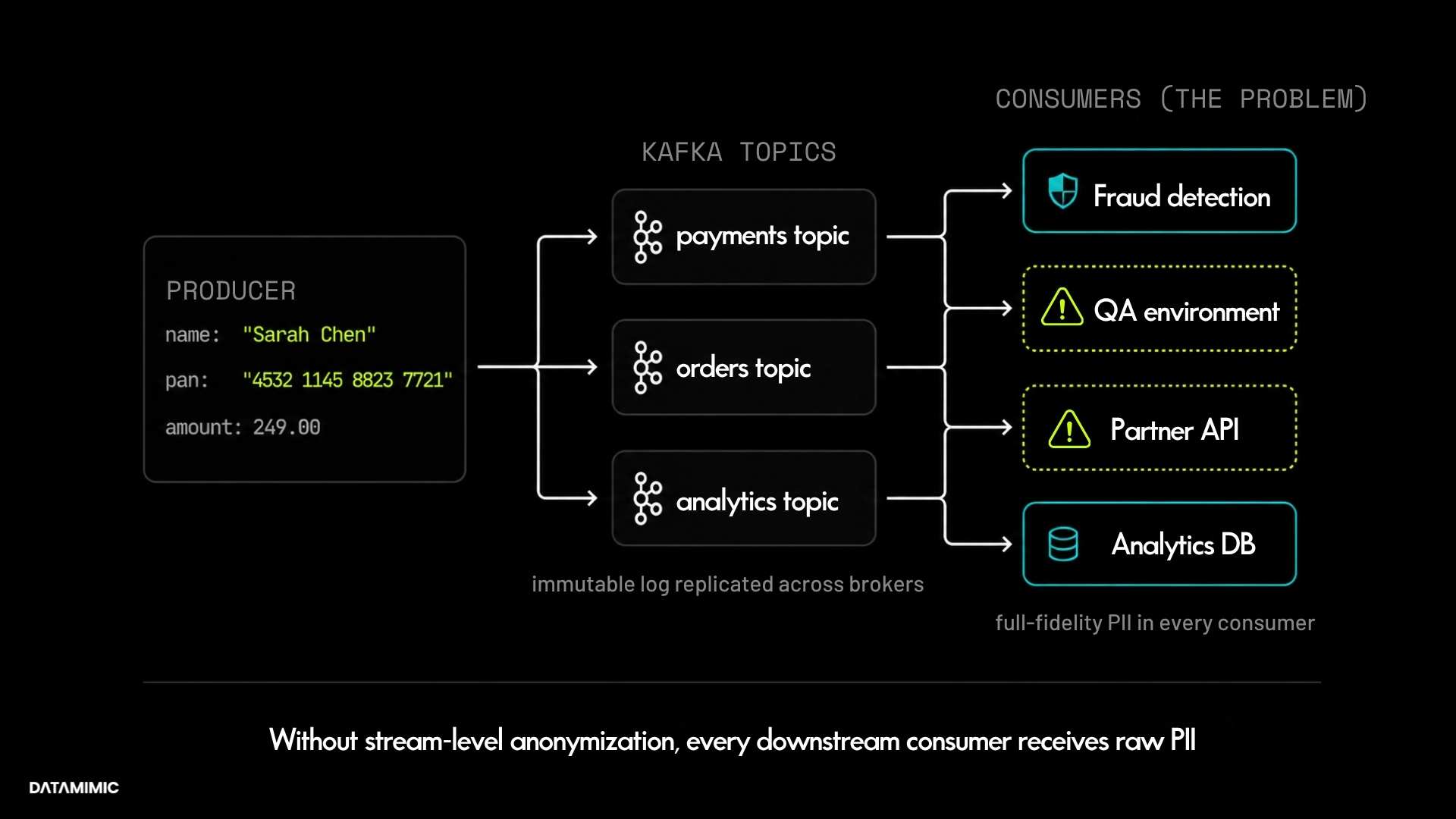

Kafka’s architecture makes cardholder data protection harder than in traditional batch systems. The append-only immutable log means sensitive records persist until retention policies expire. Topic replication spreads PII across brokers automatically. And while the Kafka ecosystem has matured — CSFLE, Schema Registry governance, SMT (Single Message Transform)-based masking, and data contracts all address parts of the problem — Apache Kafka itself still does not provide native, broker-level de-identification or policy-driven masking capabilities. As Confluent’s own documentation acknowledges, achieving GDPR, CCPA, or PCI DSS alignment requires additional tooling on top of Kafka. Consumer groups downstream — fraud detection, reporting, QA environments, partner integrations — each receive full-fidelity records unless anonymization is applied upstream.

For teams dealing with GDPR Kafka compliance, this creates operational challenges that traditional batch-oriented governance models were not designed to handle. Sensitive records may propagate across replicated topics, downstream consumers, and non-production environments long before retention windows or deletion workflows take effect.

PCI DSS 4.0 Requirement 3 mandates that stored PAN be rendered unreadable (through strong cryptography, truncation, index tokens, or keyed one-way hashing) and that PAN be masked when displayed. Requirement 4 mandates that PAN be protected with strong cryptography during transmission over open, public networks. GDPR’s right to erasure adds another layer of complexity: Kafka’s immutable log makes traditional deletion difficult, so in practice teams address it through crypto-shredding (destroying encryption keys to render data unreadable), tombstone events, retention minimization, and pseudonymization. None of these is trivial in a high-throughput streaming environment.

For Kafka-based payment pipelines, this means data protection must happen at the stream level — either at the producer before the message enters the topic, within a stream processor (Kafka Streams, Flink), or at a gateway/proxy layer before consumers receive the payload. Applying it after the fact, on data already sitting in non-production databases, leaves PII exposed across systems you may not fully control.
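As a rough illustration of the producer-side option, the sketch below sanitizes a payment event before it is serialized for a non-production topic. The field names (`pan`, `cvv`, `cardholder_name`) and the choice to keep the first six and last four PAN digits are assumptions made for the example, not a schema taken from any real system. The actual Kafka producer call is left out so the snippet stays self-contained.

```python
import copy
import json


def mask_pan(pan: str) -> str:
    """Keep the first six and last four digits and mask everything in between."""
    digits = pan.replace(" ", "").replace("-", "")
    if len(digits) < 13 or not digits.isdigit():
        raise ValueError("unexpected PAN format")
    return digits[:6] + "*" * (len(digits) - 10) + digits[-4:]


def sanitize_event(event: dict) -> dict:
    """Return a sanitized copy; the caller's original event is left untouched."""
    safe = copy.deepcopy(event)
    safe["pan"] = mask_pan(safe["pan"])
    safe.pop("cvv", None)                  # drop the CVV entirely
    safe["cardholder_name"] = "REDACTED"
    return safe


def serialize(event: dict) -> bytes:
    """Sanitize first, then serialize, so raw values never reach the topic."""
    return json.dumps(sanitize_event(event), sort_keys=True).encode("utf-8")


# Hypothetical event with a well-known test card number.
event = {
    "pan": "4111 1111 1111 1111",
    "cvv": "123",
    "cardholder_name": "Jane Doe",
    "amount": "42.00",
}
payload = serialize(event)  # these bytes would be handed to the producer
```

Sanitizing inside the serialization path means no code path can publish the raw event by accident, which is the main argument for masking before the message enters the topic.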

Data Anonymization Techniques for Streaming Payment Systems

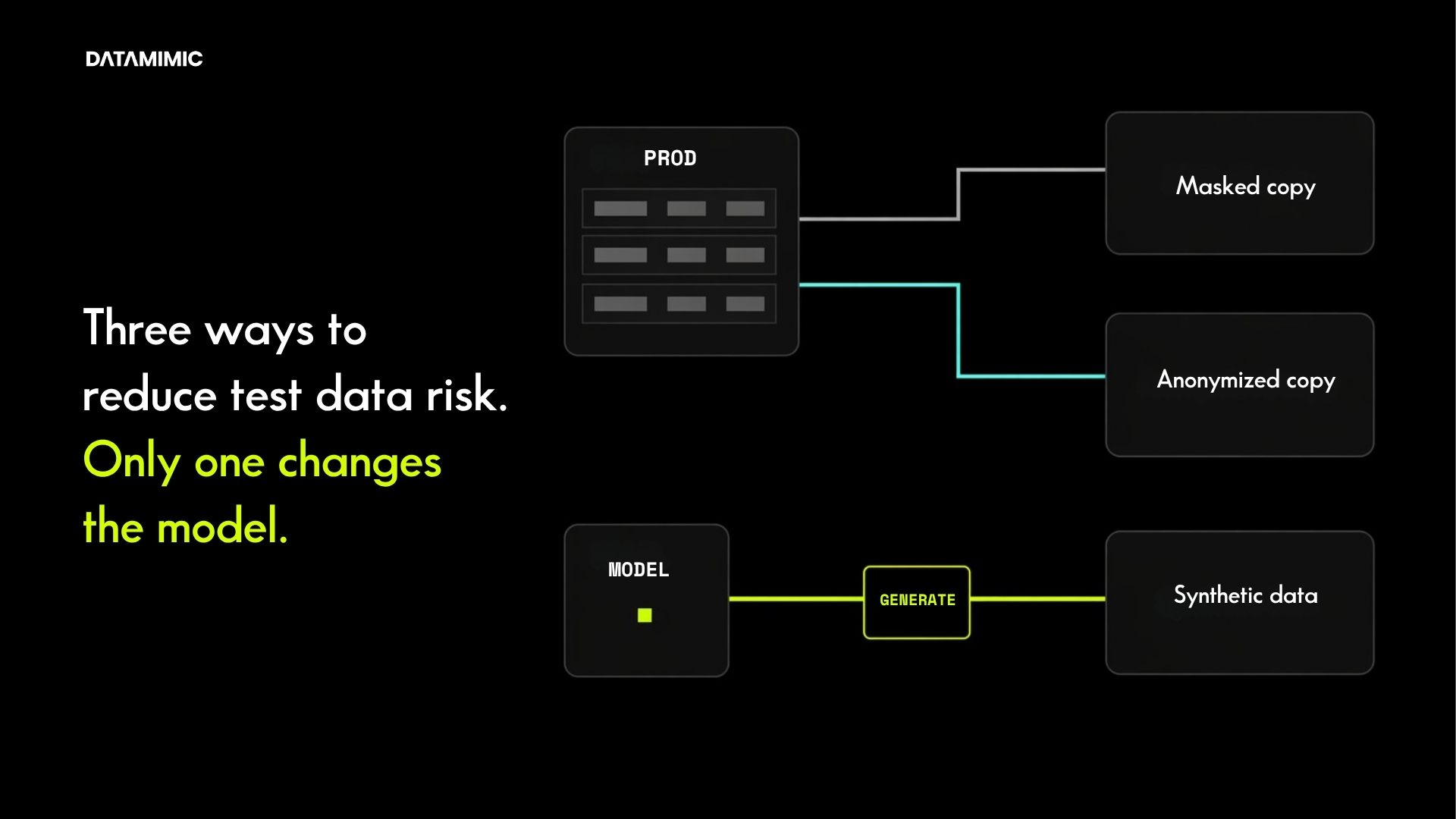

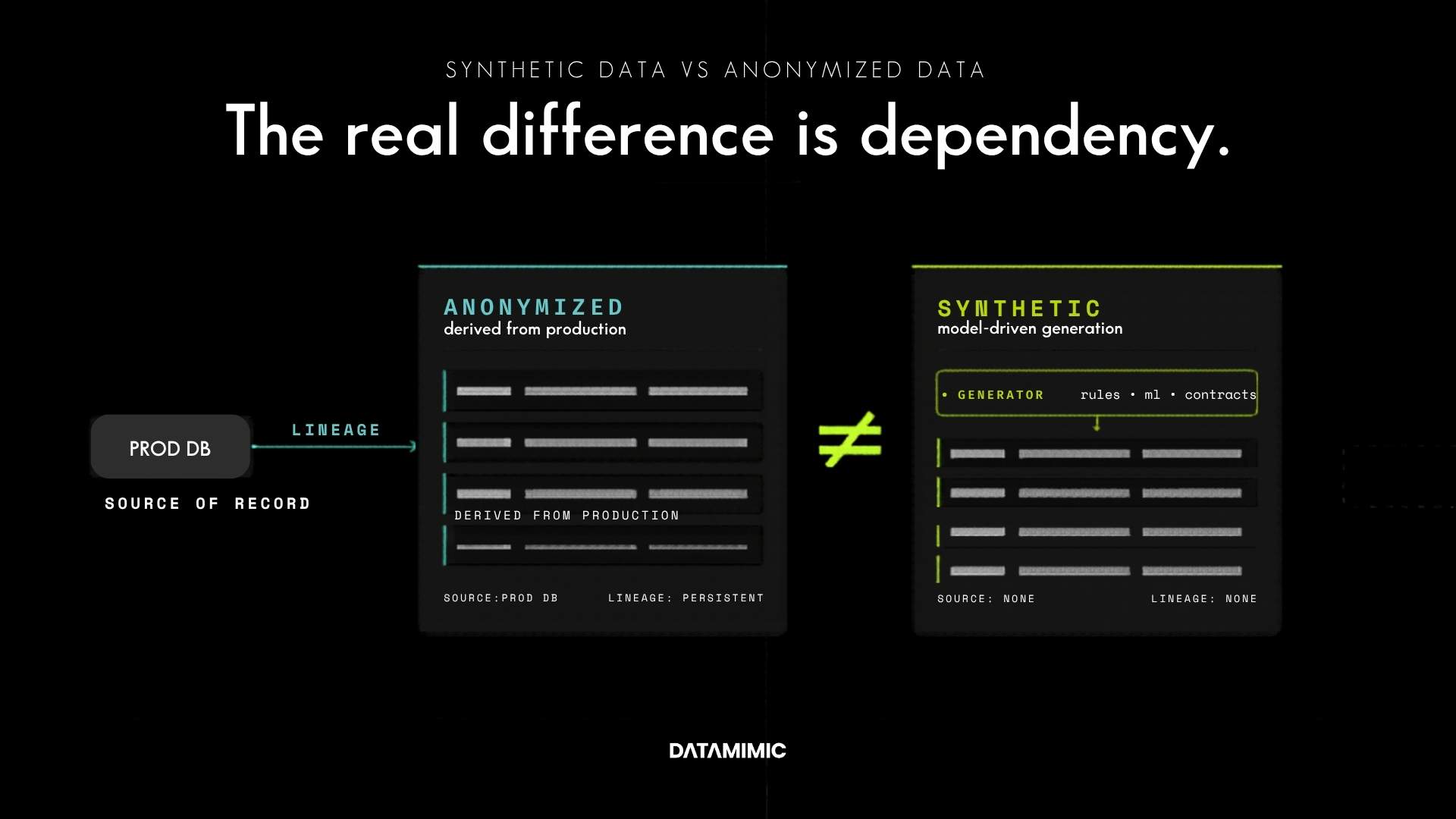

Not every data protection method fits streaming payment workloads equally. It is worth distinguishing upfront: encryption (at rest, in transit, field-level) and access control remain the first line of defense for production payment data. Anonymization and pseudonymization serve a different purpose — making data safe for non-production use cases like testing, analytics, and partner integrations where the original values are not needed. The techniques below focus on that second category. The choice between them depends on whether downstream consumers need format consistency, cross-topic referential integrity, or statistical fidelity.

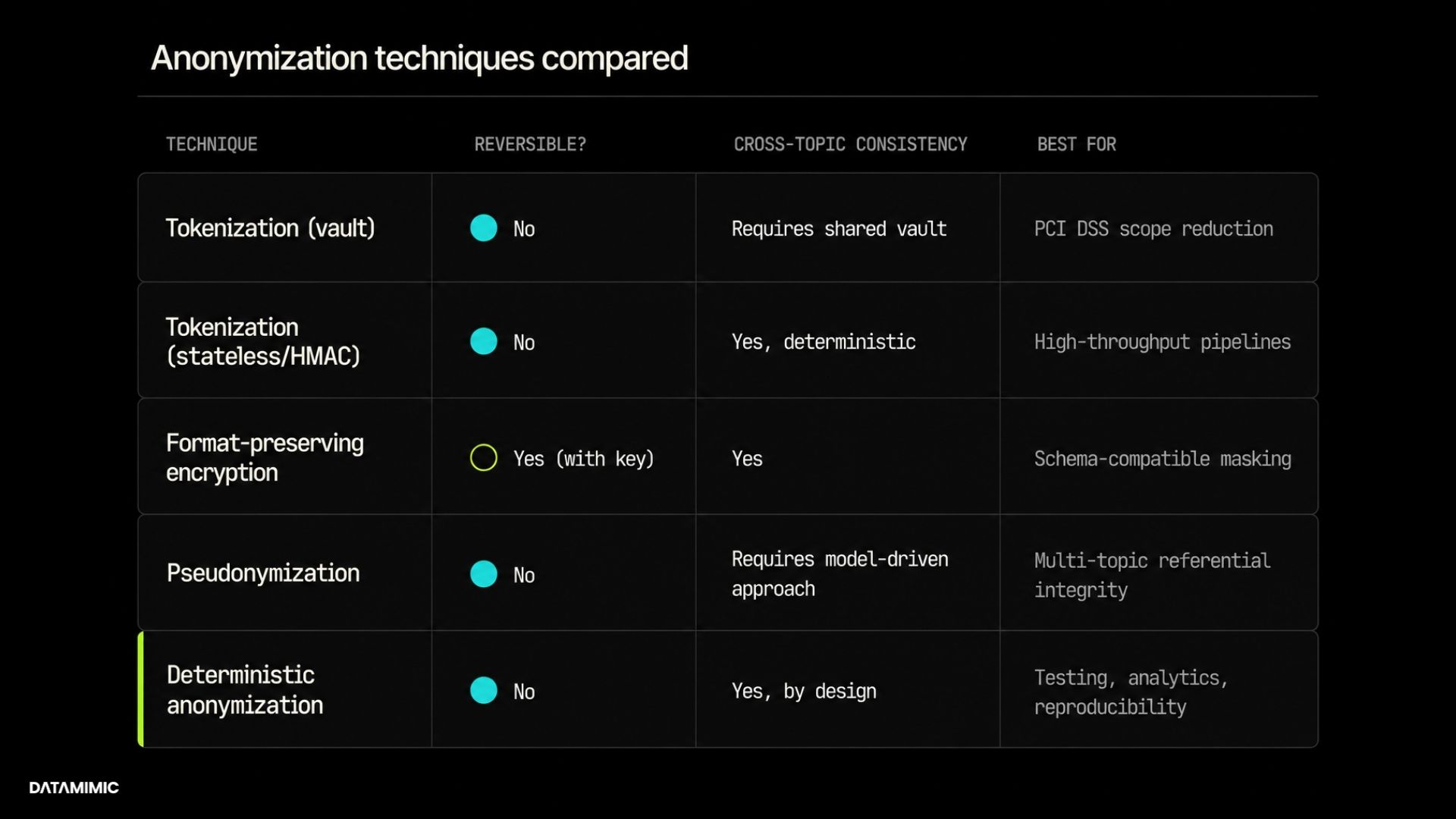

Tokenization

Replaces sensitive values (typically PAN, account IDs, or IBAN) with surrogate tokens that cannot be reversed outside the controlled boundary. In vault-based implementations, a centralized token store maintains the mapping, which introduces lookup latency. Stateless alternatives exist: HMAC-based pseudonymization and deterministic cryptographic tokenization generate consistent tokens without a centralized vault, trading reversibility for throughput. Best suited for PCI DSS data masking where the original value must never be recoverable outside the controlled boundary.
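The stateless variant can be sketched with nothing more than a keyed hash. The example below is a minimal illustration, assuming a secret key held inside the controlled boundary. The `tok_` prefix, the 32-character truncation, and the `domain` label are illustrative choices, not part of any standard.

```python
import hashlib
import hmac


def tokenize(value: str, key: bytes, domain: str = "pan") -> str:
    """Derive a deterministic, non-reversible token from a sensitive value.

    The domain label keeps tokens for different field types from colliding
    when the same key is reused across fields.
    """
    message = f"{domain}:{value}".encode("utf-8")
    digest = hmac.new(key, message, hashlib.sha256).hexdigest()
    return f"tok_{digest[:32]}"


# Hypothetical key; in practice it would come from a key management system.
key = b"demo-key-not-for-production"
token_a = tokenize("4111111111111111", key)
token_b = tokenize("4111111111111111", key)
token_other_key = tokenize("4111111111111111", b"another-key")
```

Because the space of valid PANs is small, an unkeyed hash could be brute-forced. The security of this approach rests on keeping the key secret.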

Format-Preserving Encryption (FPE)

Related to but distinct from tokenization. FPE applies encryption while preserving the data structure — a 16-digit PAN encrypts to another 16-digit string that preserves field length and, in some implementations, checksum validation such as Luhn compatibility. Downstream systems continue to function without schema changes. Security architects classify FPE as encryption (reversible with the correct key), not anonymization — an important distinction for compliance scoping, since encrypted data may still fall under PCI DSS scope depending on key management.
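The Luhn compatibility mentioned above is easy to check on the consuming side. The sketch below is not an FPE implementation (a real one would rely on a vetted library for a standardized mode such as NIST FF1). It only shows the checksum that a downstream validator might apply to a 16-digit output, and how a check digit can be recomputed.

```python
def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for index, char in enumerate(reversed(number)):
        digit = int(char)
        if index % 2 == 1:        # double every second digit from the right
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def luhn_check_digit(partial: str) -> str:
    """Return the single digit that makes partial + digit Luhn-valid."""
    for candidate in "0123456789":
        if luhn_valid(partial + candidate):
            return candidate
    raise ValueError("no valid check digit found")


check = luhn_check_digit("411111111111111")  # first 15 digits of a test PAN
```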

Pseudonymization

Replaces identifiers with synthetic equivalents while preserving referential integrity across topics. If a customer appears in payments, orders, and service-events topics, the pseudonymized customer ID must be consistent across all three. Without this, joins downstream break and analytics become meaningless.

This is where most homegrown masking scripts fail. They handle single-topic anonymization but lose consistency the moment data spans multiple streams — which, in any real payment system, it always does.
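To make the consistency requirement concrete, the sketch below pseudonymizes a customer ID with the same keyed function in two hypothetical topics and shows that a join on the pseudonym still works. The topic contents, field names, and key are invented for illustration.

```python
import hashlib
import hmac

KEY = b"demo-key-not-for-production"  # hypothetical shared key


def pseudonymize_customer_id(customer_id: str, key: bytes = KEY) -> str:
    """Map a customer ID to a stable pseudonym; same input, same output."""
    digest = hmac.new(key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "cust_" + digest[:16]


def anonymize(records: list) -> list:
    """Replace customer_id in every record, leaving other fields as they are."""
    return [
        {**record, "customer_id": pseudonymize_customer_id(record["customer_id"])}
        for record in records
    ]


# Stand-ins for records read from two different topics.
payments = [{"customer_id": "C-1001", "amount": 25}, {"customer_id": "C-2002", "amount": 40}]
orders = [{"customer_id": "C-1001", "order": "A"}, {"customer_id": "C-2002", "order": "B"}]

anon_payments = anonymize(payments)
anon_orders = anonymize(orders)

# The join on the pseudonymized key yields the same pairs as on the original.
orders_by_customer = {order["customer_id"]: order for order in anon_orders}
joined = [
    (payment["amount"], orders_by_customer[payment["customer_id"]]["order"])
    for payment in anon_payments
]
```

A script that generated a fresh random ID per topic would pass a single-topic review and still fail this join, which is the failure mode described above.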

Field-Level Masking

Applies masking rules per field, typically at the consumer or proxy layer. Different consumer groups see different levels of masking: the analytics team sees masked PAN and redacted names, the fraud detection service sees tokenized account IDs with preserved transaction amounts, and the QA environment sees fully synthetic records.

The advantage is flexibility. Different consumer groups can receive different visibility levels into the same payment event stream without duplicating infrastructure. This approach is commonly used in PCI DSS data masking programs where analytics, fraud detection, operations, and QA teams each require different levels of access to payment data. The risk is policy sprawl — managing per-consumer masking rules across dozens of topics and consumer groups requires centralized policy governance, not per-application configuration.
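One way to keep per-consumer rules from sprawling is to hold them in a single policy table that every consumer path goes through. The sketch below assumes two hypothetical consumer groups and three sensitive fields. It fails closed, so an unknown group raises an error and a sensitive field without a rule is dropped rather than passed through.

```python
import hashlib
import hmac
from typing import Any, Callable, Dict

TOKEN_KEY = b"demo-key-not-for-production"  # hypothetical key


def mask_pan(pan: str) -> str:
    return pan[:6] + "*" * (len(pan) - 10) + pan[-4:]


def redact(_value: Any) -> str:
    return "REDACTED"


def tokenize(value: str) -> str:
    digest = hmac.new(TOKEN_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


SENSITIVE_FIELDS = {"pan", "cardholder_name", "account_id"}

# Central policy table: consumer group -> field -> masking function.
POLICIES: Dict[str, Dict[str, Callable[[Any], Any]]] = {
    "analytics": {"pan": mask_pan, "cardholder_name": redact},
    "fraud-detection": {"account_id": tokenize},
}


def apply_policy(consumer_group: str, event: dict) -> dict:
    rules = POLICIES[consumer_group]  # KeyError for unknown groups (fail closed)
    view = {}
    for field, value in event.items():
        if field in rules:
            view[field] = rules[field](value)
        elif field not in SENSITIVE_FIELDS:
            view[field] = value       # non-sensitive fields pass through
        # sensitive fields without a rule are dropped
    return view


event = {
    "pan": "4111111111111111",
    "cardholder_name": "Jane Doe",
    "account_id": "ACC-42",
    "amount": "42.00",
}
analytics_view = apply_policy("analytics", event)
fraud_view = apply_policy("fraud-detection", event)
```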

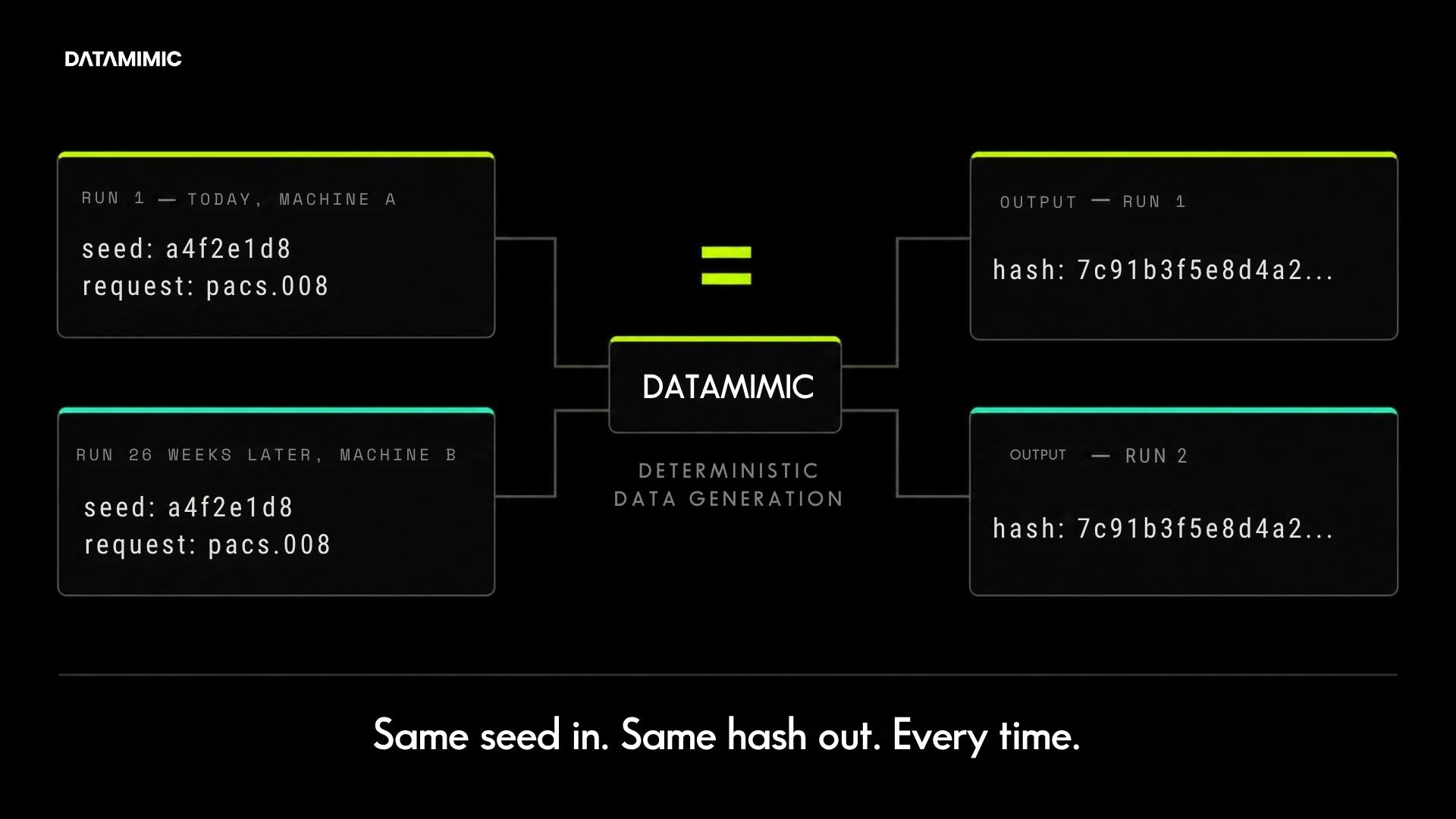

Deterministic Anonymization

Same input, same anonymized output. Every time, every environment, every machine. A seeded anonymization function applied to a PAN on Monday produces the same token on Friday, on a different host, in a different CI pipeline run.

This property is non-negotiable for teams that need reproducible test datasets, audit traceability, and regression testing against consistent anonymized data. Statistical or random anonymization breaks all three. In practice, deterministic Kafka PII masking is often required wherever downstream analytics, reconciliation, replay testing, or cross-topic joins depend on referential consistency.

For Kafka-based payment systems, deterministic anonymization is the baseline. The harder problem is combining it with cross-entity referential integrity, domain-aware generation for regulatorily defined field formats (IBAN check digits, SWIFT field lengths, EDIFACT segments), and a verifiable audit trail. That combination is where most homegrown approaches and single-purpose tools fall short.
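As a small example of domain-aware deterministic generation, the sketch below derives a synthetic German IBAN from an original value and a seed, then computes the check digits with the standard mod-97 rule so the result passes structural validation. The HMAC-based derivation and the fixed `DE` layout (18-digit BBAN) are assumptions for illustration. The generated bank code will generally not correspond to a real bank.

```python
import hashlib
import hmac


def _to_digits(text: str) -> str:
    """Convert letters to numbers (A=10 ... Z=35); digits stay as they are."""
    return "".join(str(int(char, 36)) for char in text)


def iban_check_digits(country: str, bban: str) -> str:
    """Compute the two IBAN check digits using the mod-97 rule."""
    remainder = int(_to_digits(bban + country + "00")) % 97
    return f"{98 - remainder:02d}"


def iban_is_valid(iban: str) -> bool:
    """Move the first four characters to the end and check mod 97 == 1."""
    return int(_to_digits(iban[4:] + iban[:4])) % 97 == 1


def synthetic_de_iban(original: str, seed: bytes) -> str:
    """Derive a structurally valid German IBAN deterministically from the input."""
    digest = hmac.new(seed, original.encode("utf-8"), hashlib.sha256).hexdigest()
    bban = f"{int(digest, 16) % 10**18:018d}"  # 18 digits for a German BBAN
    return "DE" + iban_check_digits("DE", bban) + bban


seed = b"demo-seed-not-for-production"       # hypothetical seed
original = "DE89370400440532013000"          # widely used example IBAN
first = synthetic_de_iban(original, seed)
second = synthetic_de_iban(original, seed)
```

Running the function on a different host or on a different day yields the same synthetic IBAN, as long as the seed is the same.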

What Real-Time Data Masking Requires in Production Pipelines

Applying these techniques in a production Kafka pipeline imposes four architectural requirements that separate real-time data masking tools from batch-oriented solutions:

- Latency: Anonymization must happen in-stream. For payment pipelines handling pacs.008 (ISO 20022 credit transfer message) or SWIFT MT messages at thousands of transactions per second, the anonymization layer must not add meaningful processing delay.

- Cross-topic consistency: Anonymized values must stay consistent across Kafka topics and downstream consumers. A tokenized customer ID in the payments topic must resolve to the same token in the orders topic. Without this, referential integrity across the event stream is destroyed.

- Auditability: Every anonymization run must be traceable by task ID, model version, timestamp, and content hash. PCI DSS 4.0 requires detailed records of data access activities. DORA requires traceability and reproducibility of data used in resilience testing. Anonymization that cannot prove what it did, when, and with which configuration will struggle to satisfy either.

- Deployment constraints: For many Tier-1 banks and payment processors, data cannot leave the controlled environment. While regulated institutions increasingly adopt managed services like Confluent Cloud, AWS MSK, or hybrid sovereign cloud deployments, the anonymization layer itself often must run on-prem or air-gapped — particularly for institutions operating under DORA or national data sovereignty requirements. These deployment requirements are increasingly driven by streaming data compliance obligations and cross-border data governance rules. The ability to deploy without cloud dependencies remains a hard requirement for a significant portion of the market.
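The auditability requirement above can be illustrated with a minimal run record. The sketch below hashes the anonymized output in a canonical form and attaches the identifiers the list names (task ID, model version, timestamp, content hash). The record layout and function names are hypothetical and do not reflect any particular tool's log schema.

```python
import hashlib
import json
import uuid
from datetime import datetime, timezone


def content_hash(records: list) -> str:
    """SHA-256 over a canonical JSON form, so key order does not change the hash."""
    hasher = hashlib.sha256()
    for record in records:
        canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
        hasher.update(canonical.encode("utf-8"))
        hasher.update(b"\n")
    return hasher.hexdigest()


def audit_record(records: list, model_version: str) -> dict:
    """Build one audit entry describing an anonymization run."""
    return {
        "task_id": str(uuid.uuid4()),
        "model_version": model_version,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "record_count": len(records),
        "content_hash": content_hash(records),
    }


output = [{"customer_id": "cust_ab12", "amount": 25}]
entry = audit_record(output, model_version="payments-model-1.0")
```

Two runs over the same deterministic output produce the same `content_hash`, which gives an auditor something to compare without needing access to the data itself.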

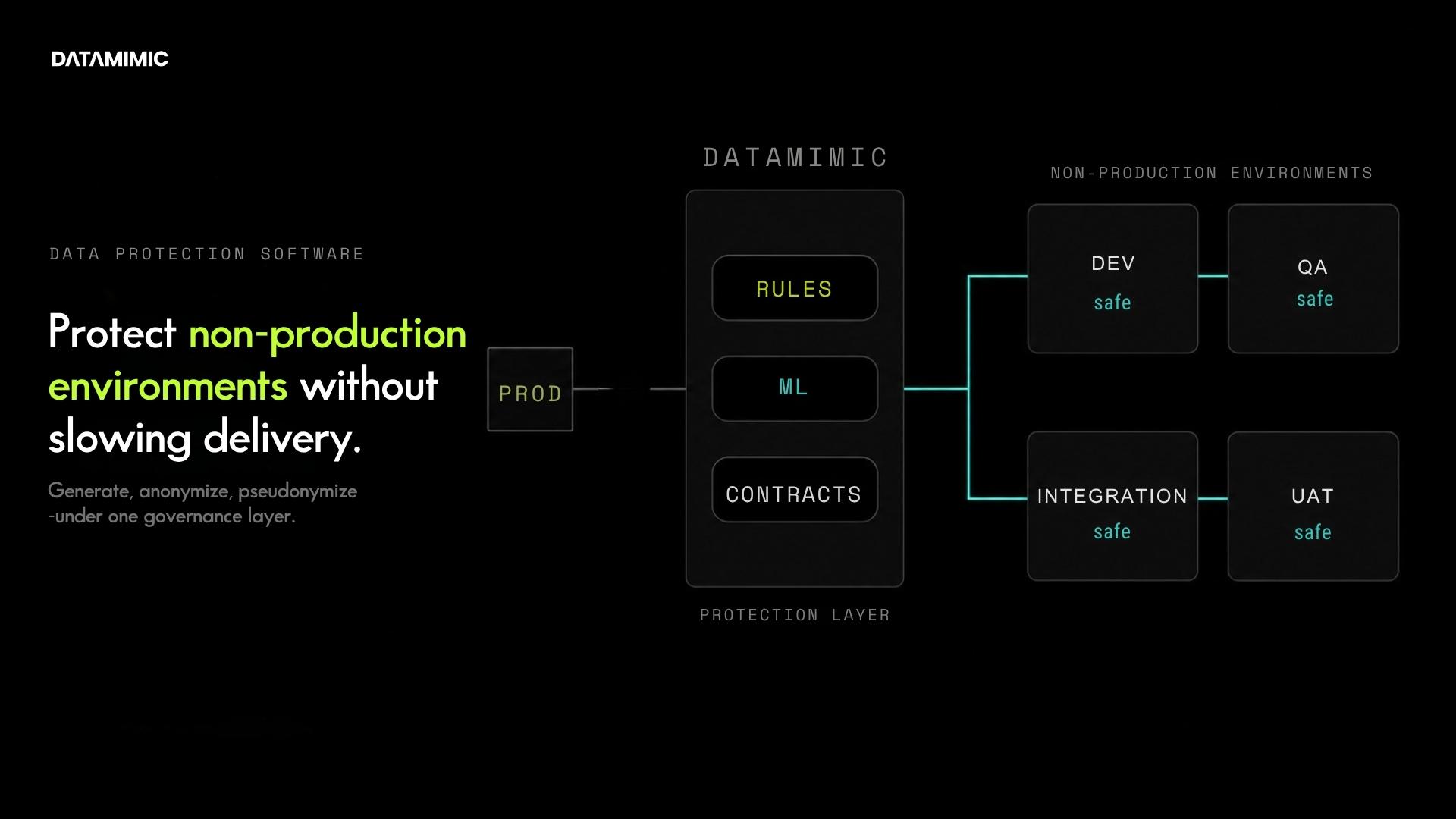

About DATAMIMIC

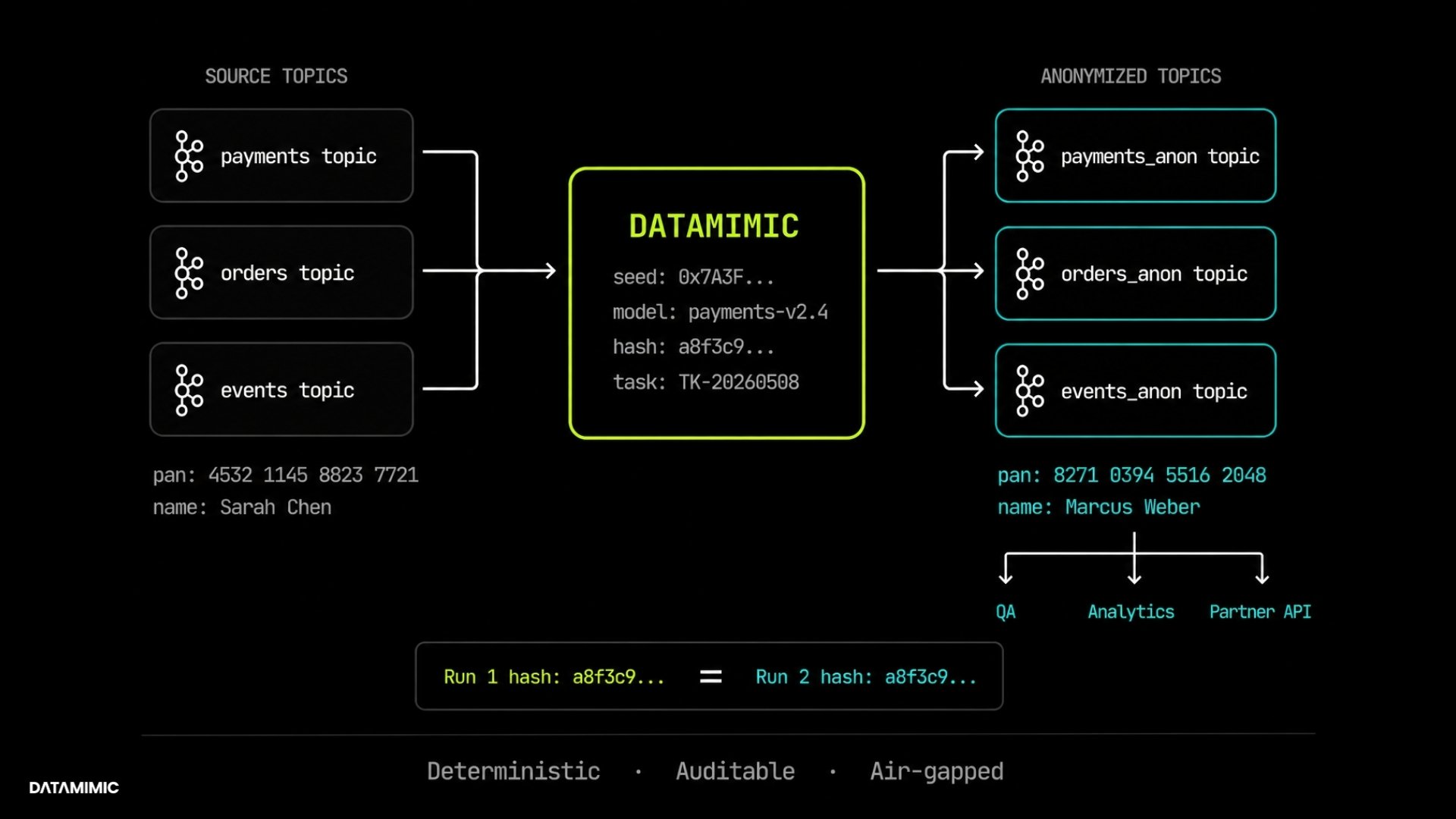

DATAMIMIC is a model-driven, deterministic-first test data platform for regulated enterprises in banking, insurance, and the public sector. It addresses the requirements outlined above through four capabilities:

- Deterministic, rules-based generation: Regulated payment fields (PAN, IBAN, SWIFT MT fields) are anonymized using rules-based generators with deterministic seeds. The same seed, DSL, and worker count produce byte-identical output across hosts and across time, which can be verified independently through content hashing.

- Cross-topic referential integrity: Anonymized identifiers stay consistent across multiple Kafka topics through model-based generation. When a customer ID is pseudonymized in payments, the same pseudonym appears in orders, events, and any downstream consumer.

- Audit contracts: Every generation run is logged with task ID, model version, and content hash. When proof is missing, the system blocks the operation — no silent fallback.

- On-prem, air-gapped deployment: Fully offline via Helm chart or Podman. No mandatory external connectivity or cloud dependencies.

The ACI Worldwide deployment covered real-time anonymization of streaming payment data across Kafka topics in a Tier-1 payments environment, with PCI DSS alignment, full audit traceability, and the throughput required for production-grade payment volumes. The full case study is available at datamimic.io/case-study/aci-worldwide.

Getting Started

For architects and engineers: technical documentation at docs.datamimic.io covers Kafka connector configuration, model-based generation, and API integration. DATAMIMIC is also available on GitHub and PyPI for hands-on evaluation.

For decision-makers: the ACI Worldwide case study documents how real-time Kafka anonymization was implemented for a global payment processor.

If your team is working on streaming data compliance for payment pipelines and struggling with cross-topic consistency or audit traceability, we’re happy to walk through the architecture in practice.

FAQ

How do you anonymize PAN data in Kafka without breaking referential integrity across topics?

Cross-topic referential integrity requires the same input to produce the same anonymized output across every topic where it appears. Stateless approaches like HMAC-based pseudonymization with a shared key achieve this for single fields. Domain-specific cases — IBAN check digits, SWIFT field lengths, EDIFACT segments — need rule-based generators that preserve structural validity while keeping the mapping deterministic across the entire event stream.

Is format-preserving encryption the same as tokenization for PCI DSS?

No. Format-preserving encryption (FPE) is reversible with the correct key, so security architects classify it as encryption, and the data may remain in PCI DSS scope depending on key management. Tokenization replaces values with surrogates that cannot be reversed outside a controlled boundary, which can take the data out of scope. Both preserve format. They differ in reversibility, scope implications, and audit treatment.

Can you delete data from a Kafka topic to comply with GDPR right-to-erasure?

Not directly — Kafka’s append-only log makes record-level deletion impractical. Teams in practice address this through crypto-shredding (destroying encryption keys to render data unreadable), tombstone events on compacted topics, retention minimization, and pseudonymization at the producer side. None of these are trivial in high-throughput streaming environments. The choice depends on retention requirements, downstream consumer dependencies, and audit obligations.
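The tombstone mechanism can be pictured with a toy model of a compacted topic: the log keeps only the latest value per key, and a record with a null value marks the key for removal. The sketch below simulates that end state in plain Python. It is not Kafka code, and real compaction runs asynchronously and retains tombstones for a configurable period before discarding them.

```python
from typing import Dict, Iterable, Optional, Tuple


def compact(log: Iterable[Tuple[str, Optional[str]]]) -> Dict[str, str]:
    """Return the state a fully compacted log would converge to."""
    latest: Dict[str, str] = {}
    for key, value in log:
        if value is None:
            latest.pop(key, None)   # tombstone: forget this key
        else:
            latest[key] = value     # newer value replaces the older one
    return latest


# Hypothetical log keyed by customer ID; the last record is a tombstone.
log = [
    ("cust-1", "profile-v1"),
    ("cust-2", "profile-v1"),
    ("cust-1", "profile-v2"),
    ("cust-1", None),
]
state = compact(log)
```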

How do you generate consistent test data for Kafka payment topics?

In DATAMIMIC, a single generate block can write to multiple targets in one run — Kafka, MongoDB, JSON, CSV, or any combination. The model is the source of truth; targets are projections of it. Same seed, same content, byte-identical across every sink:

    <setup numProcess="10">
      <kafka-exporter id="kafkaSsl" topic="payments.v1" partition="0"/>
      <generate name="payment_event" count="30000000"
                target="kafkaSsl,JSON,LogExporter" cyclic="true">
        <key name="id" generator="IncrementGenerator"/>
        <variable name="person" entity="Person"/>
        <key name="customerName" script="person.name"/>
        <key name="email" script="person.email"/>
      </generate>
    </setup>

No coordination layer, no re-keying, no downstream join logic. The Kafka topic, the JSON file, and the log receive the same record stream from the same model.

What does a deterministic test data run actually prove for a regulator?

Each DATAMIMIC run is logged with a task ID, engine version, per-statement status, planned-vs-exported counts, and timestamps — machine-parseable and reproducible from the seed. A run produces lines like:

    Task ID: 9f5d61e7-f125-441e-a775-cd99c55c7b7e
    HEALTH | client=source | type=rdbms | status=ok | latency_ms=30
    Summary | CUSTOMER: planned 10 · exported 10 in 0.301s
    CONTROL | kind=stmt_end | stmt=CUSTOMER | status=ok
    CONTROL | kind=end | status=ok

If a fallback occurs — a missing locale file, a dataset substitution — it is logged explicitly. No silent fallback. That auditability directly addresses PCI DSS 4.0 logging requirements and DORA traceability expectations.
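Lines in this shape are straightforward to check automatically. The sketch below parses the pipe-delimited `key=value` lines and decides whether a run ended cleanly. The format is inferred only from the sample lines shown above, not from a documented log schema, and the function names are hypothetical.

```python
from typing import Dict, Iterable, Optional


def parse_log_line(line: str) -> Optional[Dict[str, str]]:
    """Parse 'CHANNEL | key=value | ...'; return None for other line shapes."""
    parts = [part.strip() for part in line.split("|")]
    fields = dict(part.split("=", 1) for part in parts[1:] if "=" in part)
    if not fields:
        return None
    return {"channel": parts[0], **fields}


def run_succeeded(lines: Iterable[str]) -> bool:
    """True if a CONTROL end marker is present and every CONTROL status is ok."""
    parsed = (parse_log_line(line) for line in lines)
    controls = [e for e in parsed if e and e["channel"] == "CONTROL"]
    ended_ok = any(e.get("kind") == "end" and e.get("status") == "ok" for e in controls)
    return ended_ok and all(e.get("status") == "ok" for e in controls)


sample = [
    "Task ID: 9f5d61e7-f125-441e-a775-cd99c55c7b7e",
    "HEALTH | client=source | type=rdbms | status=ok | latency_ms=30",
    "Summary | CUSTOMER: planned 10 · exported 10 in 0.301s",
    "CONTROL | kind=stmt_end | stmt=CUSTOMER | status=ok",
    "CONTROL | kind=end | status=ok",
]
ok = run_succeeded(sample)
```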

Alexander Kell

May 13, 2026
